Model Mania: Why Do We Need Both The GFS and ECMWF Models?

Hello everyone!

This post is the fourth in our Model Mania series, which aims to give a brief introduction to weather models for those without a rigorous atmospheric science background. In the previous three posts, I discussed the basics of weather models, why there are two general types of weather models (regional and global) and how they’re different, and some of the key differences between the ECMWF and GFS global models. Given that the GFS is consistently less skillful than the ECMWF, and that both models require considerable investments of time and money to maintain, it’s fair to ask why we even bother with the GFS. Why not just use the ECMWF if it’s better? This post will argue that both models play a crucial role in weather forecasting, and that the time and money it takes to run both are well spent.

The first and perhaps most important reason not to just ditch the GFS is that sometimes it actually is more accurate than the ECMWF. I gave a couple of examples in the last post that are worth repeating.

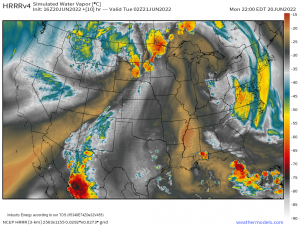

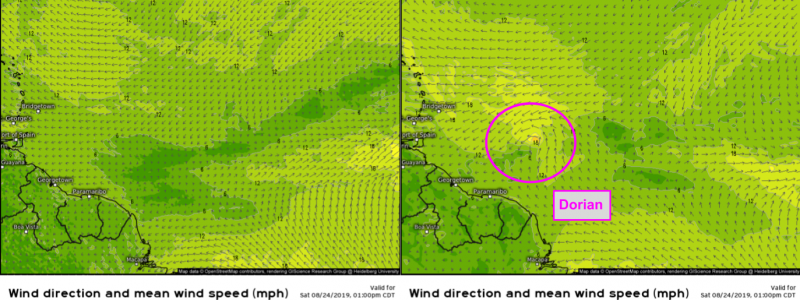

A notable recent example is the formation of Tropical Storm (later Hurricane) Dorian in August 2019. Medium range forecasts (3-6 days) from the GFS model were fairly consistent in showing the formation of a tropical cyclone from a disturbance over the tropical Atlantic ESE of Barbados. The ECMWF model was fairly consistent in predicting that this disturbance would fail to organize sufficiently to become a tropical cyclone until just a couple days before advisories on Dorian were initiated by the National Hurricane Center. While there was still plenty of warning for residents in the Bahamas and the US regarding Dorian’s impacts, additional lead time on TC formation is incredibly important, especially when storms are forming much closer to land.

Another noteworthy example comes from January of 2015, when the GFS correctly predicted a relatively modest snowstorm for New York City while the ECMWF was forecasting a blockbuster blizzard. That event occurred before we began archiving model data in 2017, but you can read a bit more about it here. There have been many other cases where the GFS’ predictions for a specific storm system have turned out to be superior to those made by the ECMWF, but most of the time the differences are hardly noticeable and don’t make front-page news the way the ECMWF’s high-profile forecasting “victories” do.

Even when the GFS doesn’t end up with a prediction as accurate as those made by the ECMWF, it is still adding value to the forecast process. Due to the immense complexity of the atmosphere, forecasts of powerful storms, either hurricanes or extratropical cyclones, are extremely sensitive to very small changes in how different disturbances are handled. Having two models running at the same time, producing different outcomes, is extremely important to establishing what effect any given disturbance might have on the eventual track/intensity of the storm.

Here’s an example from March of 2018 which illustrates the value of having two different models.

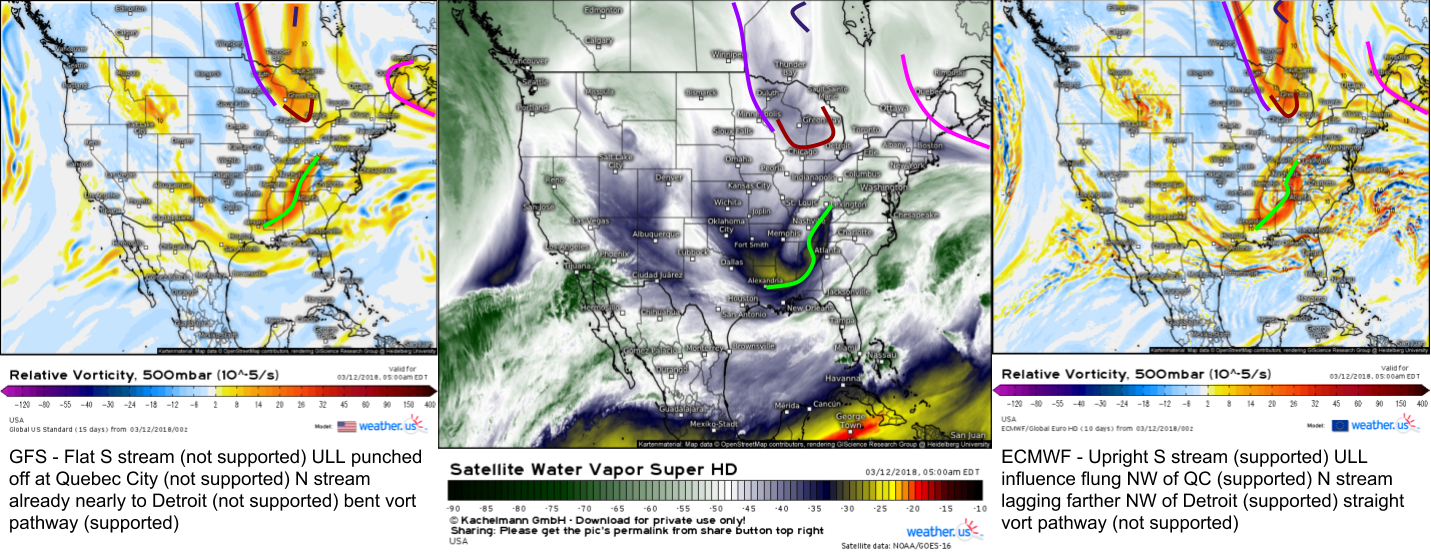

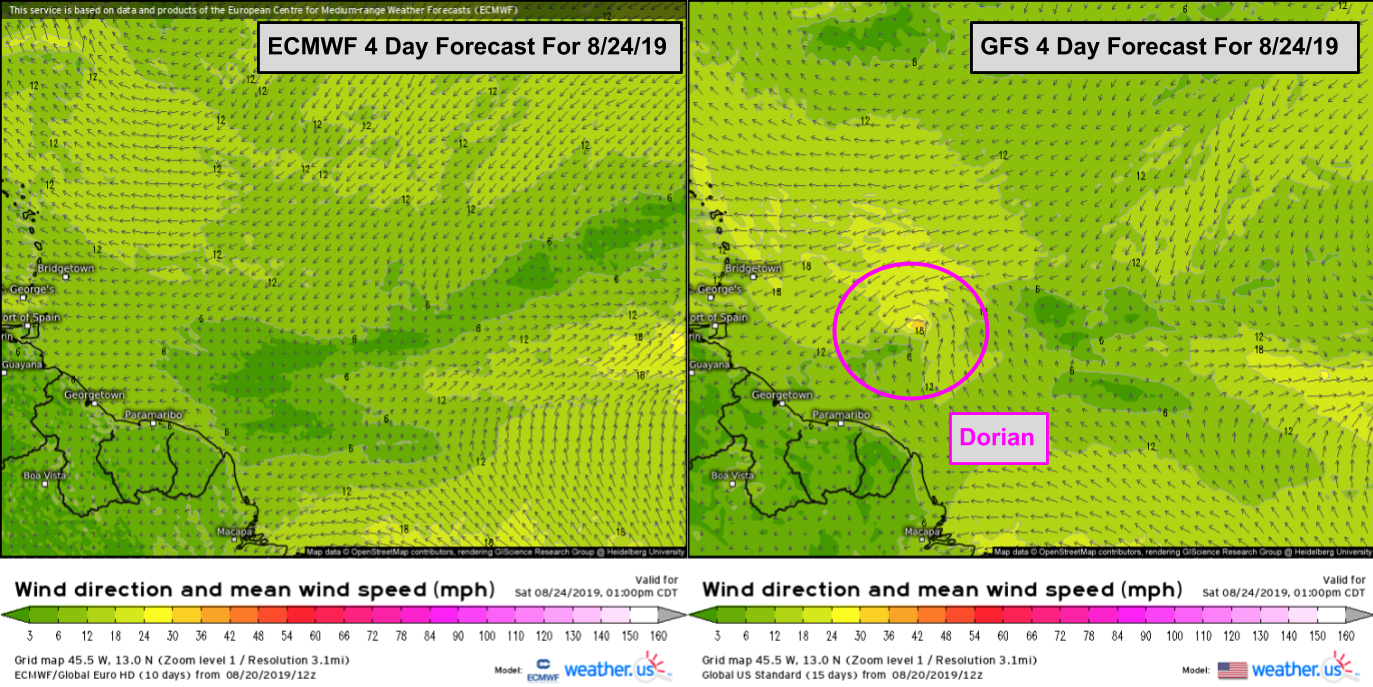

The left map shows the GFS’ forecast for 500mb vorticity about 12 hours before a potential storm off the East Coast, while the right map shows the ECMWF’s forecast for the same parameter at the same time. The middle map is water vapor (WV) satellite imagery for the same time (5 AM EST 3/12/18). Because WV satellite imagery “sees” features at roughly 500mb (it’s actually more like 400mb, but it’s a pretty good approximation), each model can be compared against reality to see which has a better handle on what’s happening now. Whichever model has a better handle on what’s happening now will generally do better with the forecast. In this case, the GFS was forecasting a much smaller storm than the ECMWF. By comparing both forecasts with WV imagery, it became clear that the ECMWF was doing a much better job than the GFS of handling the relevant disturbances over the Great Lakes and Southeastern US, which added confidence to the ECMWF’s forecast for a big storm. The storm ended up being a blockbuster for interior parts of the Northeast. I measured over two feet in the mountains of Maine!
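For readers who like to see the idea in code, here is a minimal sketch of that "which model matches reality right now?" check. The comparison described above is done by eye, and vorticity maps and satellite imagery are not in the same units, so this is only a simplified numerical stand-in: it assumes each model's field and the observations have already been put on the same grid in comparable units. The function names (`rmse`, `closer_model`), the model labels, and all of the numbers are hypothetical and made up for illustration.

```python
import math


def rmse(forecast, observed):
    """Root-mean-square error between two equally sized grids (lists of rows)."""
    squared = [
        (f - o) ** 2
        for f_row, o_row in zip(forecast, observed)
        for f, o in zip(f_row, o_row)
    ]
    return math.sqrt(sum(squared) / len(squared))


def closer_model(fields, observed):
    """Return the name of the model with the lowest RMSE, plus every model's score."""
    scores = {name: rmse(field, observed) for name, field in fields.items()}
    return min(scores, key=scores.get), scores


# Made-up 2x3 grids standing in for the strength of a disturbance; not real data.
observed = [[0.0, 2.0, 4.0], [1.0, 3.0, 5.0]]
fields = {
    "model_a": [[0.0, 1.0, 2.0], [0.0, 1.0, 2.0]],
    "model_b": [[0.0, 2.0, 4.0], [1.0, 3.0, 4.0]],
}

best, scores = closer_model(fields, observed)
print(best, scores)
```

A lower score means a closer match to what is actually happening, which, as noted above, generally (but not always) goes along with a better forecast.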

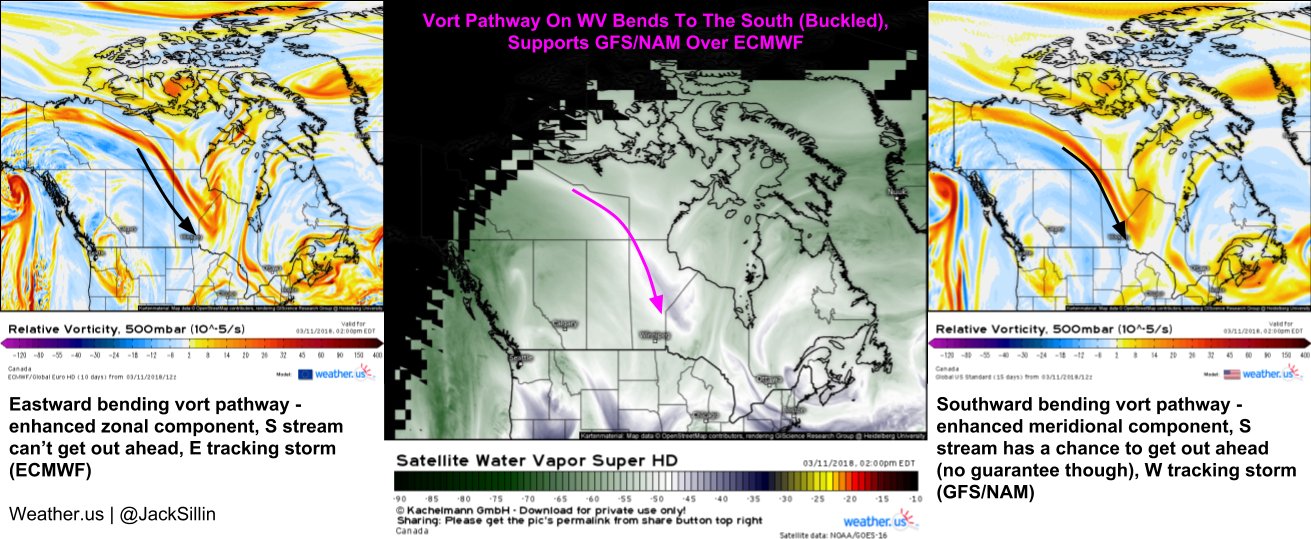

Interestingly, a couple of days before the maps I showed above, the GFS was predicting the bigger storm while the ECMWF barely had a storm at all. By comparing how each model handled a disturbance over the Canadian Prairies, I (along with many others) was able to have confidence in the prediction of a big storm, even though the typically more accurate ECMWF was forecasting little more than flurries.

Any time a big storm is forecast along the East Coast, a weaker or “out to sea” solution is always part of the range of possible outcomes. Having one model (in this case the GFS) represent that scenario while another (in this case the ECMWF) represents the “big storm” scenario is valuable because the GFS’ faulty handling of disturbances over the Great Lakes relative to the ECMWF provided evidence for discarding the “out to sea” solution. If we only had the ECMWF model, it would be much harder to substantiate a forecast for big snow, because we know there will be cases when the ECMWF is wrong. How would we know our current situation won’t be one like NYC in January 2015? Each model is important not only because it provides a (fairly good) forecast, but also because the two models help expose each other’s weaknesses, allowing forecasters to make a more informed and accurate forecast.

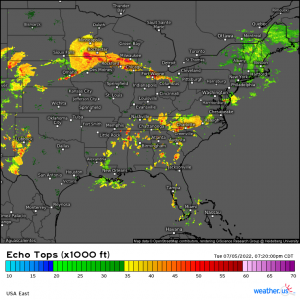

While I used a New England snowstorm as my example in this post, the same logic applies to just about any medium-range forecast predicament. I could’ve just as easily picked a tricky hurricane, severe thunderstorm outbreak, or West Coast atmospheric river prediction to make the same points.

In addition to the practical forecast applications, the GFS is also valuable because of its open access and support of many other specialized models. Anyone can tap into the stream of data generated by the GFS to make their own forecasts, or support their own regional models. All regional models need boundary conditions (information about what’s happening outside their limited area of interest, known as a domain). For example, if you’re running a regional model for Florida to predict sea breeze thunderstorms, you need something to tell your model if a hurricane forming hundreds of miles away in the Atlantic will impact the state. Without these boundary conditions, your model would keep calling for calm weather with scattered afternoon thunderstorms even as the hurricane was bearing down.
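To make the boundary condition idea concrete, here is a toy sketch. It is not how any real regional model ingests GFS data; it is a hypothetical one-dimensional "regional model" in which the wind carries weather from the left edge of the domain toward the right. The function names (`step`, `run`), the `courant` parameter, and every number are assumptions made up for illustration. The only thing that differs between the two runs is what the model is told about conditions just outside its upwind edge.

```python
def step(domain, inflow_value, courant=0.5):
    """Advance the toy model one time step using simple upwind advection.

    The wind blows from index 0 toward the end of the list. inflow_value is
    the boundary condition: the value sitting just outside the upwind edge.
    """
    padded = [inflow_value] + domain
    return [
        padded[i + 1] - courant * (padded[i + 1] - padded[i])
        for i in range(len(domain))
    ]


def run(domain, boundary_series):
    """Step the model once for each boundary value supplied."""
    for inflow in boundary_series:
        domain = step(domain, inflow)
    return domain


calm = [0.0] * 5  # the regional domain starts out quiet

# Hypothetical values from a global model at the domain's upwind edge:
# a disturbance (value 1.0) arrives after the first time step.
global_boundary = [0.0, 1.0, 1.0, 1.0]

# With no outside information, the edge is assumed to stay calm forever.
no_boundary_info = [0.0] * 4

with_bc = run(calm, global_boundary)
without_bc = run(calm, no_boundary_info)
print("with boundary conditions:   ", with_bc)
print("without boundary conditions:", without_bc)
```

In the run fed by the "global model" values, the disturbance works its way into the domain. In the run without them, the toy model stays calm no matter how many steps it takes, which is the Florida-and-the-hurricane problem in miniature.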

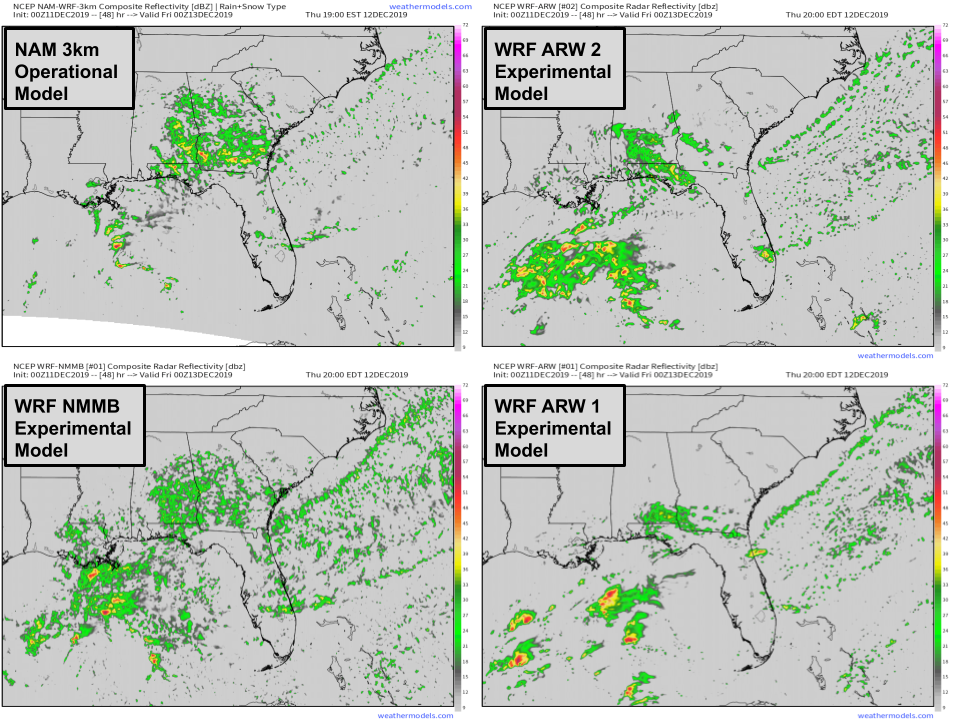

Because access to ECMWF data is so expensive, most regional models, and nearly all experimental regional models run by university groups or other organizations trying to test the latest modeling innovations, are run with boundary conditions supplied by the GFS. Operational regional models are useful because they can forecast smaller scale features “invisible” to global models, as I discussed in the second post of this series. Experimental regional models likely won’t produce very accurate forecasts, but they’re valuable because they’re “labs” of sorts where the latest and greatest in modeling procedures can be tested in a “real world” environment. (Remember the governing equations I mentioned in the first post of this series? We’re still trying to figure out better versions of these equations to make predictions more accurate.) Without the GFS to provide the boundary conditions for these experimental forecasts, it would be much harder to run these experimental models, and thus the pace of progress towards better weather models would slow.

Hopefully this post has convinced you that despite its generally lower skill, the GFS model still has substantial value and is worth the time and money it takes to run and maintain. It can and does outperform the ECMWF in some individual storm predictions, and even when it doesn’t, knowing why it’s predicting a different outcome than the ECMWF and why that outcome is wrong (by comparing to observations) helps add confidence in the ECMWF’s predictions. Additionally, having an open-access global model helps support a wide array of operational regional models that have tremendous forecast usefulness, as well as experimental regional models that help drive the science of weather modeling forward.

The final post in our Model Mania series will discuss the limitations of weather models and why it’s still important to consult with human forecasters, especially in high-consequence situations.

-Jack